hdfs-site.xml

    <property>
        <name>dfs.permissions</name>
        <value>false</value>
    </property>

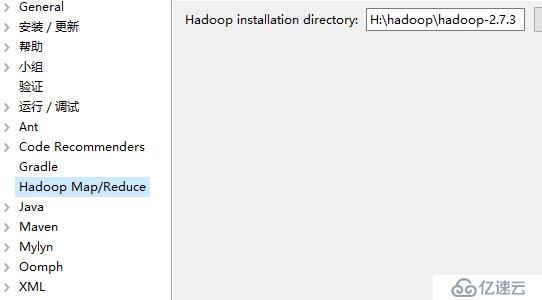

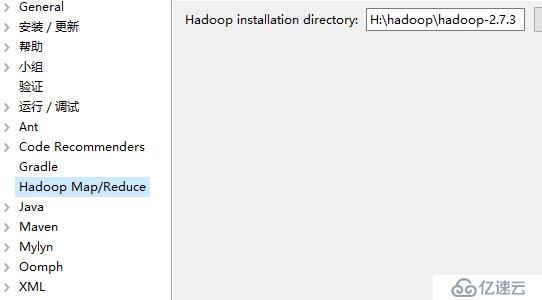

    <dependencies>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-client</artifactId>
            <version>2.7.3</version>
        </dependency>
        <dependency>
            <groupId>junit</groupId>
            <artifactId>junit</artifactId>
            <version>3.8.1</version>
            <scope>test</scope>
        </dependency>
    </dependencies>

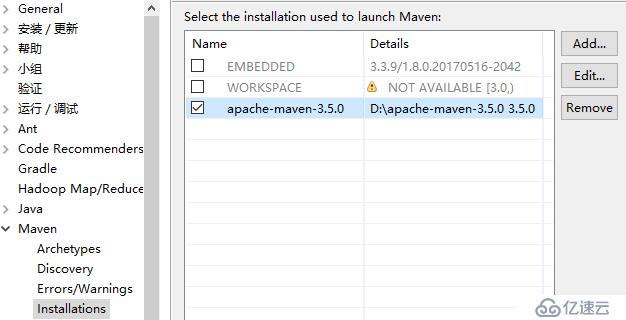

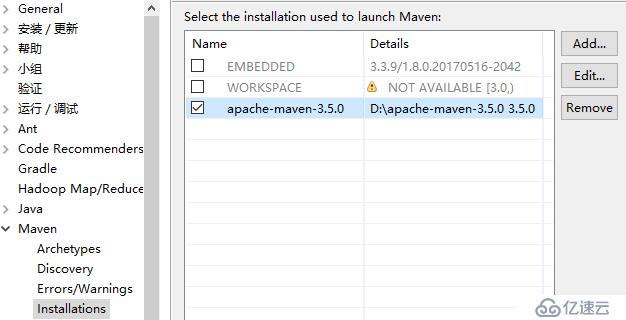

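    import java.io.IOException;
    import java.util.StringTokenizer;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
    import org.apache.hadoop.util.GenericOptionsParser;

    public class WordCount {

      // Mapper: splits each input line on whitespace and emits (word, 1).
      public static class TokenizerMapper
           extends Mapper<Object, Text, Text, IntWritable> {

        private final static IntWritable one = new IntWritable(1);
        private Text word = new Text();

        public void map(Object key, Text value, Context context
                        ) throws IOException, InterruptedException {
          StringTokenizer itr = new StringTokenizer(value.toString());
          while (itr.hasMoreTokens()) {
            word.set(itr.nextToken());
            context.write(word, one);
          }
        }
      }

      // Reducer: sums the counts emitted for each word.
      public static class IntSumReducer
           extends Reducer<Text, IntWritable, Text, IntWritable> {
        private IntWritable result = new IntWritable();

        public void reduce(Text key, Iterable<IntWritable> values,
                           Context context
                           ) throws IOException, InterruptedException {
          int sum = 0;
          for (IntWritable val : values) {
            sum += val.get();
          }
          // The original listing is cut off after the loop above. The rest is
          // reconstructed from the standard Apache Hadoop WordCount example.
          result.set(sum);
          context.write(key, result);
        }
      }

      public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        String[] otherArgs = new GenericOptionsParser(conf, args).getRemainingArgs();
        if (otherArgs.length < 2) {
          System.err.println("Usage: wordcount <in> [<in>...] <out>");
          System.exit(2);
        }
        Job job = Job.getInstance(conf, "word count");
        job.setJarByClass(WordCount.class);
        job.setMapperClass(TokenizerMapper.class);
        job.setCombinerClass(IntSumReducer.class);
        job.setReducerClass(IntSumReducer.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);
        // Every argument except the last is an input path; the last is the output path.
        for (int i = 0; i < otherArgs.length - 1; ++i) {
          FileInputFormat.addInputPath(job, new Path(otherArgs[i]));
        }
        FileOutputFormat.setOutputPath(job, new Path(otherArgs[otherArgs.length - 1]));
        System.exit(job.waitForCompletion(true) ? 0 : 1);
      }
    }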
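To sanity-check what the WordCount job should produce before submitting it to a cluster, the same logic can be sketched in plain Java with no Hadoop dependency. This is only an illustration: `LocalWordCount` and `countWords` are hypothetical names, not part of Hadoop or of the listing above. The sketch tokenizes each line with `StringTokenizer`, as the mapper does, and sums the occurrences of each word, as the reducer does. A `TreeMap` stands in for the sorted key order the reducer sees.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.StringTokenizer;
import java.util.TreeMap;

// Hypothetical helper class for local experiments (not part of Hadoop).
class LocalWordCount {

    // Combines the map step (tokenize on whitespace, count 1 per token)
    // and the reduce step (sum per word) in a single in-memory pass.
    static Map<String, Integer> countWords(List<String> lines) {
        Map<String, Integer> counts = new TreeMap<>(); // keys kept in sorted order
        for (String line : lines) {
            StringTokenizer itr = new StringTokenizer(line);
            while (itr.hasMoreTokens()) {
                counts.merge(itr.nextToken(), 1, Integer::sum);
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        // Sample input chosen for illustration only.
        List<String> lines = Arrays.asList("hello hadoop", "hello world");
        countWords(lines).forEach((word, n) -> System.out.println(word + "\t" + n));
    }
}
```

Running `main` on the two sample lines prints `hadoop 1`, `hello 2`, and `world 1`, one tab-separated pair per line, which is the same shape as the job's text output.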