- Overview: clean up the data in a local file, then import it into HBase.

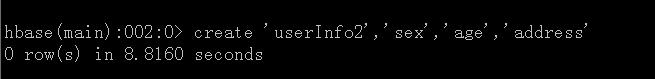
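Before running the job on a cluster, it can help to see the whole data flow in memory. The sketch below is not the author's code. It uses a hypothetical class `ImportFlowSketch` and made-up sample lines, and assumes input lines of the form `rowKey@addressValue#ageValue#sexValue`. The map step splits each line on the first `@`, and the shuffle groups the values by row key in sorted order. In the real job, each group would then be turned into HBase `Put` rows by the reduce step. No Hadoop or HBase classes are used.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical in-memory sketch of the import job's data flow (no Hadoop/HBase needed).
class ImportFlowSketch {

    /**
     * Map + shuffle: split each line on the first '@' into row key and value,
     * then group the values by row key. TreeMap keeps keys sorted, as the shuffle does.
     */
    static Map<String, List<String>> groupByRowKey(List<String> lines) {
        Map<String, List<String>> grouped = new TreeMap<>();
        for (String line : lines) {
            String[] kv = line.split("@", 2);
            if (kv.length < 2 || kv[0].isEmpty()) {
                continue; // malformed line (no '@' or empty row key): skipped
            }
            grouped.computeIfAbsent(kv[0], k -> new ArrayList<>()).add(kv[1]);
        }
        return grouped;
    }

    public static void main(String[] args) {
        // Made-up sample input; the last line is deliberately malformed
        List<String> lines = Arrays.asList(
                "1002@Shanghai#30#female", "1001@Beijing#25#male", "bad line");
        // Reduce: in the real job each row key becomes an HBase row; here we only print the groups
        for (Map.Entry<String, List<String>> row : groupByRowKey(lines).entrySet()) {
            System.out.println(row.getKey() + " -> " + row.getValue());
        }
    }
}
```

Running `main` prints `1001 -> [Beijing#25#male]` and then `1002 -> [Shanghai#30#female]`. The malformed line is dropped.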

- Create the table in HBase

Mapper program

package com.hadoop.mapreduce.test.map;

import java.io.IOException;

import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

public class WordCountHBaseMapper extends Mapper<Object, Text, Text, Text> {

    // Output objects are reused so that a new Text is not allocated for every record
    private final Text keyValue = new Text();
    private final Text valueValue = new Text();

    // Input line format: key@addressValue#ageValue#sexValue
    @Override
    protected void map(Object key, Text value, Context context)
            throws IOException, InterruptedException {
        String lineValue = value.toString();
        // Split on the first '@' only: row key on the left, '#'-separated fields on the right
        String[] valuesArray = lineValue.split("@", 2);
        // Skip empty or malformed lines (HBase also rejects empty row keys)
        if (valuesArray.length < 2 || valuesArray[0].isEmpty()) {
            return;
        }
        keyValue.set(valuesArray[0]);
        valueValue.set(valuesArray[1]);
        context.write(keyValue, valueValue);
    }
}
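The mapper's parsing step can be checked on its own, without a cluster. The plain-Java sketch below uses a hypothetical helper class `RecordSplitter`. It splits each line on the first `@` into the row key and the value string. It returns nothing for lines that have no `@` or an empty row key, since HBase does not accept an empty row key. The sample lines are made up.

```java
import java.util.Optional;

// Hypothetical helper: the mapper's line-splitting rule in plain Java, for local testing.
class RecordSplitter {

    /**
     * Splits "rowKey@field1#field2#field3" on the first '@'.
     * Returns {rowKey, fields}, or empty if there is no '@' or the row key is blank.
     */
    static Optional<String[]> splitRecord(String line) {
        if (line == null) {
            return Optional.empty();
        }
        String[] parts = line.split("@", 2); // limit 2: any later '@' stays in the value
        if (parts.length < 2 || parts[0].trim().isEmpty()) {
            return Optional.empty();
        }
        return Optional.of(new String[] {parts[0].trim(), parts[1]});
    }

    public static void main(String[] args) {
        String[] lines = {"1001@Beijing#25#male", "", "broken line"}; // made-up samples
        for (String line : lines) {
            Optional<String[]> kv = splitRecord(line);
            if (kv.isPresent()) {
                System.out.println(kv.get()[0] + " -> " + kv.get()[1]);
            } else {
                System.out.println("skipped: \"" + line + "\"");
            }
        }
    }
}
```

Splitting with a limit of 2 means a stray `@` inside the address field does not shift the remaining fields.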

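The reduce step turns each value string into three HBase cells. The plain-Java sketch below uses a hypothetical helper class `CellMapper` to show that mapping as `family:qualifier -> value` pairs. It uses the qualifiers from the reducer (`baseAddress`, `baseAge`, `baseSex`) and assumes the column family names `address`, `age` and `sex`. It also assumes the field order `addressValue#ageValue#sexValue` given in the mapper's comment. Check both assumptions against your own data and table.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper: which HBase cells one value string would produce.
class CellMapper {

    // {family, qualifier} per field; order assumed to be address#age#sex
    private static final String[][] COLUMNS = {
        {"address", "baseAddress"},
        {"age", "baseAge"},
        {"sex", "baseSex"},
    };

    /** Returns "family:qualifier" -> value, or an empty map if the value has too few fields. */
    static Map<String, String> toCells(String value) {
        Map<String, String> cells = new LinkedHashMap<>();
        String[] fields = value.split("#");
        if (fields.length < COLUMNS.length) {
            return cells; // malformed record: nothing would be written
        }
        for (int i = 0; i < COLUMNS.length; i++) {
            cells.put(COLUMNS[i][0] + ":" + COLUMNS[i][1], fields[i].trim());
        }
        return cells;
    }

    public static void main(String[] args) {
        System.out.println(toCells("Beijing#25#male")); // made-up sample value
        System.out.println(toCells("Beijing#25"));      // too few fields
    }
}
```

If a value has fewer than three fields, no cells are produced. Otherwise indexing `fields[2]` would throw and fail the reduce task.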

Reduce program

package com.hadoop.mapreduce.test.reduce;

import java.io.IOException;

import org.apache.hadoop.hbase.client.Put;
import org.apache.hadoop.hbase.mapreduce.TableReducer;
import org.apache.hadoop.hbase.util.Bytes;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;

public class WordCountHBaseReduce extends TableReducer<Text, Text, NullWritable> {

    @Override
    protected void reduce(Text key, Iterable<Text> values, Context context)
            throws IOException, InterruptedException {
        // The row key is the part of the input line before '@'
        byte[] rowKey = Bytes.toBytes(key.toString());
        for (Text value : values) {
            // Value format (from the mapper comment): addressValue#ageValue#sexValue
            String[] valueArray = value.toString().split("#");
            if (valueArray.length < 3) {
                continue; // skip malformed records instead of failing the task
            }
            Put putRow = new Put(rowKey);
            // addColumn(family, qualifier, value); HBase versions before 1.0 used Put.add(...)
            putRow.addColumn(Bytes.toBytes("address"), Bytes.toBytes("baseAddress"),
                    Bytes.toBytes(valueArray[0].trim()));
            putRow.addColumn(Bytes.toBytes("age"), Bytes.toBytes("baseAge"),
                    Bytes.toBytes(valueArray[1].trim()));
            putRow.addColumn(Bytes.toBytes("sex"), Bytes.toBytes("baseSex"),
                    Bytes.toBytes(valueArray[2].trim()));
            // TableOutputFormat ignores the output key; only the Put is written
            context.write(NullWritable.get(), putRow);
        }
    }
}

Main program

package com.hadoop.mapreduce.test;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.hbase.HBaseConfiguration;

import org.apache.hadoop.hbase.mapreduce.TableMapReduceUtil;

import org.apache.hadoop.io.Text;
